More of the Disease, Faster

What happens when you ask an LLM to find you an edge

This week I discovered the “vibe quant” movement (or rather, it discovered me). These are people using LLMs to find trading strategies, validate them, and put them into production. The pitch is seductive: the LLM reads the literature, implements the ideas, backtests them, and you just supervise.

I think this approach is going to cost people a lot of money. And worse, it’s going to prevent them from ever actually learning to trade.

Fair warning: this article contains some opinions that I suspect will be unpopular. I’m going to argue that most public trading content is rubbish, that the academic literature isn’t much better, and that LLMs are systematically incapable of teaching you the one thing that actually matters.

I know that’s a lot. But I’ve been doing this long enough that I’m fairly confident in these positions, and I think it’s worth saying out loud.

Before I get into the details, I want to put the punchline up front, because I think it’s the most important idea in this article.

When you do trading research properly (hypothesise, test, learn, iterate), each cycle teaches you something about market structure, about counterparties, about what kinds of constraints create opportunities.

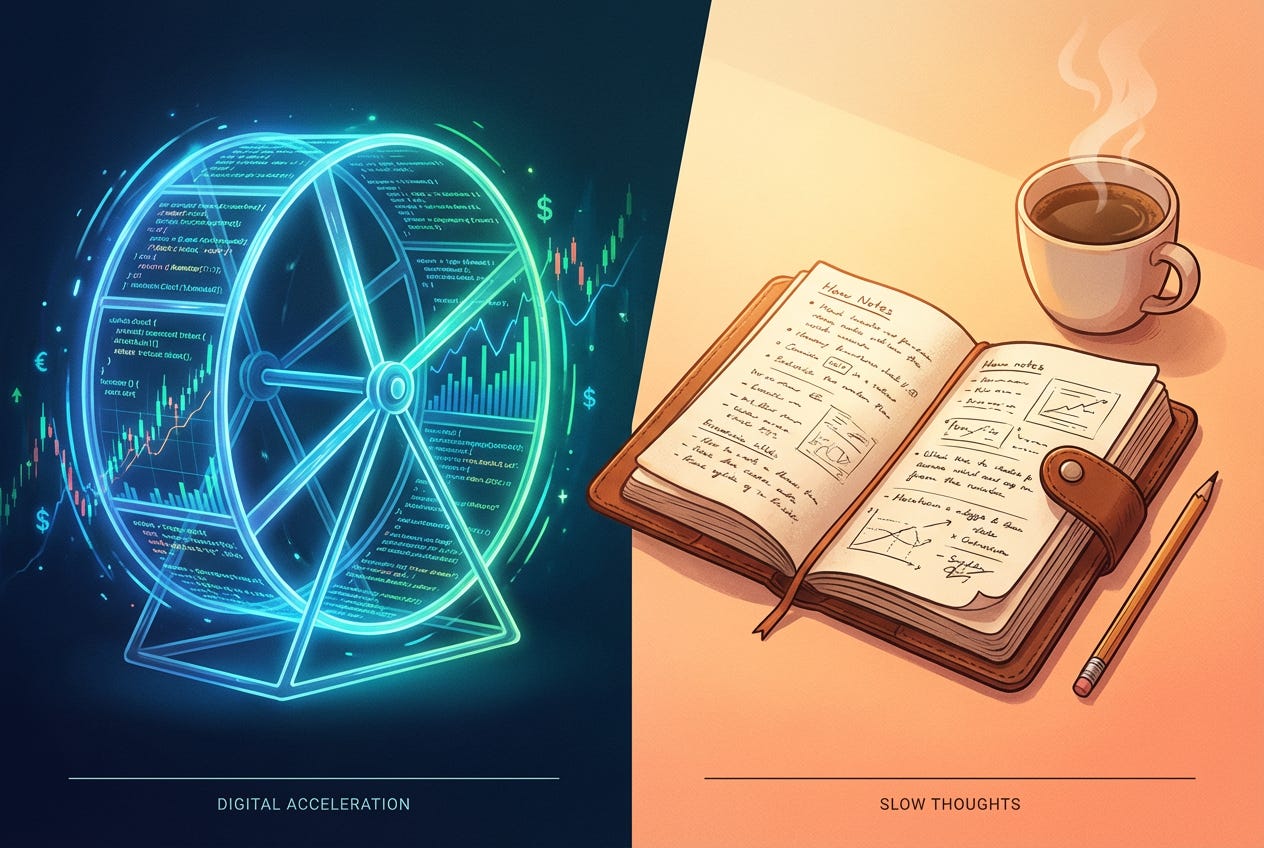

That understanding makes the next cycle better. It’s a flywheel, and it compounds.

The vibe quant approach skips the learning. The LLM does the thinking, so the trader’s understanding is exactly as shallow on day 1,000 as on day 1. You end up permanently dependent on the machine, with no ability to evaluate whether the patterns it finds are real, persistent, or tradeable.

It’s a treadmill, literally going nowhere. The LLM just speeds up the belt.

Most beginners find themselves on this treadmill. In Chapter 2 of my case study, I describe the “backtest cycle of doom”: change a parameter, add a stop loss, optimise a filter, repeat until you’ve designed your perfect equity curve. They think they’re doing research. They aren’t. They’re doing something that feels like research because it’s technical and requires skill, but:

Backtesting is an entirely technical problem, requiring little to no nuanced thinking or decision-making in the face of uncertainty. It doesn’t require wrestling with difficult problems. In that sense, it’s easy. And I was being seduced into thinking I could “solve trading” by doing something easy.

The vibe quant movement is exactly this, just turbocharged. The LLM makes the easy part even easier, and even more seductive. But the hard part, the part that actually matters, doesn’t get done at all.

To see why, we need to talk about what the LLM actually can’t do.

When I look at any potential trade, the first question I ask is: who’s losing money on the other side of this, and why will they keep doing it?

That’s the game. Edge comes from structural constraints, stuff other participants can’t or won’t do because of mandate restrictions, capacity limits, or operational awkwardness. If you can’t answer that question, you don’t have an edge.

The problem is that this question is almost entirely absent from public trading content. The vast majority of what’s out there is rubbish. Absolute rubbish. It’s all patterns, signals, and backtests, passed off as if it actually means something useful.

Even the academic literature is full of papers that exist because the author wanted to demonstrate they could speak the language of mathematics, not because they had anything useful to say about how markets actually work.

“Why would anyone lose money on the other side of this trade?” barely gets asked. And it’s the most important question.

LLMs are trained on this corpus.

So when you ask one to help you find an edge, it does what it knows: it finds patterns, builds strategies, and runs backtests. It skips the most important part, because the most important part is barely represented in its training data.

I recently wrote about the four hats of the solo trader, and a reader who calls himself “Vibe Quant” fed the article to an LLM. I want to be clear: this bloke is obviously smart, he’s thinking carefully about his process, and he shared his experience generously. I’m not having a go at him. But what happened next is a perfect illustration of the problem, and it’s worth unpacking.

The LLM’s self-diagnosis was genuinely impressive:

“The temptation isn’t to blur Hats 1 and 2. It’s to skip Hat 1 entirely [Hat 1 represents the edge-first thinking I’m banging on about]. The LLM can read a paper on accrual anomalies at 6am, implement a multi-quarter earnings composite by 6:15, wire it into a screener by 6:30, and cite it in a morning briefing by 7. That’s pure Hat 2 engineering at inhuman speed. But ‘did we verify this edge exists in our universe, at our holding period, net of our constraints’ never happened. We built the bridge without testing the soil.”

Spot on. The machine nailed the diagnosis.

But then look at what happened next.

Having identified that edge research was being skipped, the LLM “set off to develop some new internal guidance systems for itself and some Python code to help validate.”

In short, it identified the disease, and then prescribed more of the disease. Faster.

And that’s not even a bug! It’s the default behaviour!

You tell the LLM to think about mechanism and edge, and it does what it knows: more implementation, more backtesting, more engineering. It gives you a plausible-sounding mechanism, sure. But it’s synthesising from a corpus where genuine edge thinking is drowned out by noise and grift. It can’t know that. It just sounds authoritative, because that’s what it does.

To have real confidence in any edge, you need two things: a plausible mechanism and evidence in the data.

The mechanism answers: why does this exist? Who’s the counterparty? What are their constraints, and why will they keep being there? Why can I compete for this edge?

The evidence side is more straightforward to explain: does the data actually support this?

You need both.

Without a mechanism you’re just curve-fitting. And a nice story about why something should work isn’t enough either; you need to see it in the data.
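To make “curve-fitting” concrete, here is a minimal sketch of evidence without a mechanism. It assumes daily returns are zero-drift Gaussian noise, so by construction there is no counterparty, no constraint, and no edge. A moving-average crossover with a small grid of lookbacks stands in for “change a parameter, optimise a filter”. The lookback grid, the 252-day Sharpe scaling, and all function names are hypothetical choices for illustration, not anyone’s actual workflow.

```python
import math
import random


def random_walk_returns(n_days, seed=0):
    """Zero-drift Gaussian daily returns: no edge exists here by construction."""
    rng = random.Random(seed)
    return [rng.gauss(0.0, 0.01) for _ in range(n_days)]


def crossover_positions(returns, fast, slow):
    """Long (+1) when the fast price average is above the slow one, else short (-1).

    The position for day t uses only prices known before day t's return,
    so there is no look-ahead. Days without enough history stay flat (0).
    """
    prices = [100.0]
    for r in returns:
        prices.append(prices[-1] * (1.0 + r))
    # Prefix sums give O(1) averages: mean(prices[a:b]) = (cum[b] - cum[a]) / (b - a)
    cum = [0.0]
    for p in prices:
        cum.append(cum[-1] + p)
    positions = []
    for t in range(len(returns)):
        end = t + 1  # prices[0:end] are known before return t is realised
        if end < slow:
            positions.append(0)
            continue
        fast_ma = (cum[end] - cum[end - fast]) / fast
        slow_ma = (cum[end] - cum[end - slow]) / slow
        positions.append(1 if fast_ma > slow_ma else -1)
    return positions


def annualised_sharpe(daily_returns):
    """Mean over sample standard deviation, scaled by sqrt(252) trading days."""
    n = len(daily_returns)
    if n < 2:
        return 0.0
    mean = sum(daily_returns) / n
    var = sum((x - mean) ** 2 for x in daily_returns) / (n - 1)
    if var == 0:
        return 0.0
    return mean / math.sqrt(var) * math.sqrt(252)


def curve_fit_on_noise(n_days=2000, seed=0):
    """Grid-search lookbacks on the first half of the data, then score the second half."""
    returns = random_walk_returns(n_days, seed)
    split = n_days // 2
    results = []
    for fast in (2, 5, 10, 20, 40):
        for slow in (30, 60, 90, 120, 150, 200):
            if fast >= slow:
                continue
            positions = crossover_positions(returns, fast, slow)
            strategy = [p * r for p, r in zip(positions, returns)]
            results.append(
                ((fast, slow), annualised_sharpe(strategy[:split]), annualised_sharpe(strategy[split:]))
            )
    # "Optimise until the equity curve looks good": keep the best in-sample row.
    best = max(results, key=lambda row: row[1])
    return best, results


if __name__ == "__main__":
    (params, in_sample, out_of_sample), _ = curve_fit_on_noise()
    print(f"best lookbacks {params}: in-sample Sharpe {in_sample:.2f}, out-of-sample {out_of_sample:.2f}")
```

The winning lookbacks are chosen purely for their in-sample score on data that contains no edge, so there is no reason to expect the out-of-sample number to hold up. Change the seed and compare the two Sharpe ratios: the “best” parameters move around, because nothing real is holding them in place.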

An LLM can help you with the evidence side (pull data, design experiments, build visualisations). But it can’t teach you to think about mechanism, because the thinking it draws on doesn’t ask that question.

This matters enormously when things go wrong. When a strategy enters a drawdown (and it will), if you understand the mechanism, you can ask: has the counterparty adapted? Has the structural constraint been removed? You have a framework for deciding whether to sit tight, adapt, or walk away.

If your entire basis for the trade is “the LLM found a pattern and wrote some validation code,” you’ve got nothing. You can’t diagnose or adapt. Your only move is to go back to the LLM and ask it to find another pattern.

You’ll have constant anxiety that your edge isn’t real (you don’t know what it’s based on, and deep down you know that making money trading is harder than asking an LLM to do it for you), and so you’ll tinker and backtest and wonder and fret ad nauseam when you could be doing the productive work that helps you zero in on real edges.

But it’s even worse than that.

Remember what I said up front? This is where it really bites.

A trader who works through the full hypothesise-test-learn-iterate loop, even slowly, even painfully (and honestly, it is painful sometimes), accumulates genuine understanding.

Each edge you research teaches you something. That understanding compounds. The next piece of research is better because of everything you learned from the last one.

The vibe quant approach has no compounding.

There’s no learning loop, because the LLM did the thinking. You’re just as dependent on day 1,000 as on day 1.

And that’s the bit that I think people aren’t seeing. The problem isn’t just that the LLM might find bad patterns. It’s that even if it finds good ones, you’ll never develop the judgement to know the difference.

You’re running faster, but you’re not going anywhere.

Look, I’m not anti-LLM. I use them heaps. They’re brilliant for the grunt work: cleaning data, writing code, interpreting research, designing experiments. Hat 2, Hat 3, Hat 4 stuff. They make me faster at things I already know how to do.

But they can’t teach you the thing that actually matters, which is how to think about edge. That comes from working with people who do it for a living, who ask “who’s on the other side?” as a reflex. It comes from developing a good mental model of the market, its players and their constraints. It comes from the hypothesise-test-learn loop, done over and over.

The LLM can hand you a fish. It can even build you a very impressive fishing rod. But it can’t teach you where the fish are, because almost nobody who knows is writing about it on the internet.
