AI Will Create Millions of Quants

Most of Them Will Lose Money

AI makes it easier than ever to build trading strategies.

Prompt a model, run a backtest, optimise some parameters, and suddenly you’ve got a beautiful equity curve staring back at you. It feels like progress. It feels like research.

I wrote recently about how AI coding assistants tend to prescribe “more of the disease, faster”, skipping the learning that makes trading research actually valuable. This article is the bigger-picture version of that argument.

Because something interesting is happening. The barriers to building quantitative trading strategies are collapsing. And that sounds like it should be great news.

It isn’t.

AI makes strategy creation trivial.

Think about what you used to need to build and test a trading strategy: coding ability, data access, infrastructure, technical knowledge. Acquiring all of that took months or years.

Now you can prompt an LLM and have a coded, backtested strategy in minutes. That’s a real shift. I’m not dismissing it.

But lowering the cost of experimentation doesn’t just increase discoveries. It increases false discoveries.

This is the same thing that happened in academic research, factor investing, and machine learning competitions. Cheap experimentation leads to a flood of results that look significant but aren’t.

The difference is that now it’s happening to anyone with a laptop and a ChatGPT subscription.

The classic rite of passage for the aspiring quant or systematic trader is falling into the Backtest Cycle of Doom:

Come up with a strategy idea (or ask AI for one)

Run a backtest

Results look mediocre

Optimise parameters

Add a filter

Change the timeframe

Try a different universe

Combine some indicators

Suddenly... amazing backtest

It feels like you’re converging on something real.

But you’re not. You’re overfitting noise.
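To make that concrete, here is a minimal sketch that runs a toy version of the cycle on a simulated random walk. By construction, there is no edge to find. It sweeps 150 hypothetical fast/slow moving-average crossover rules and keeps the one with the best in-sample Sharpe ratio. It then checks that same rule on later data it never saw. The price process, the long/flat crossover rule, the parameter grid and the split point are all assumptions made for illustration. You should expect the winner's in-sample Sharpe to overstate what it delivers out-of-sample, which on a random walk is zero on average.

```python
import math
import random


def sharpe(returns, periods_per_year=252):
    """Annualised Sharpe ratio of per-period returns, assuming a zero risk-free rate."""
    n = len(returns)
    mean = sum(returns) / n
    var = sum((r - mean) ** 2 for r in returns) / (n - 1)
    if var == 0.0:
        return 0.0  # the rule never traded in this window
    return mean / math.sqrt(var) * math.sqrt(periods_per_year)


def crossover_returns(prices, fast, slow):
    """Daily returns of a hypothetical long/flat rule: hold tomorrow if fast SMA > slow SMA today."""
    prefix = [0.0]
    for p in prices:
        prefix.append(prefix[-1] + p)  # prefix sums make each moving average O(1)
    rets = []
    for t in range(len(prices) - 1):
        position = 0.0
        if t + 1 >= slow:  # both averages need a full window of history
            fast_ma = (prefix[t + 1] - prefix[t + 1 - fast]) / fast
            slow_ma = (prefix[t + 1] - prefix[t + 1 - slow]) / slow
            position = 1.0 if fast_ma > slow_ma else 0.0
        # Decide at the close of day t, earn the return from t to t + 1 (no look-ahead).
        rets.append(position * (prices[t + 1] / prices[t] - 1.0))
    return rets


# A driftless random walk: there is no edge here to discover.
rng = random.Random(42)
prices = [100.0]
for _ in range(2000):
    prices.append(prices[-1] * (1.0 + rng.gauss(0.0, 0.01)))

WARMUP = 200  # longest slow lookback, so every rule is judged on the same days
SPLIT = 1000  # days before SPLIT are "in-sample", the rest are held out

results = []
for fast in range(5, 55, 5):
    for slow in range(60, 210, 10):
        rets = crossover_returns(prices, fast, slow)
        results.append((sharpe(rets[WARMUP:SPLIT]), sharpe(rets[SPLIT:]), fast, slow))

# "Optimise parameters": keep whichever rule had the best in-sample Sharpe.
best_is, best_oos, best_fast, best_slow = max(results)
print(f"{len(results)} rules tested on pure noise")
print(f"best in-sample Sharpe: {best_is:.2f} (fast={best_fast}, slow={best_slow})")
print(f"same rule out-of-sample: {best_oos:.2f}")
```

Adding filters, timeframes or universes to the sweep only grows the number of candidates, which pushes the best in-sample number further up without making it any more real.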

Each tweak is another implicit hypothesis test. Run enough of them and statistics all but guarantees you’ll find something that looks incredible. If you test 10,000 parameter combinations, some of them will show spectacular performance. Not because they work, but because somewhere in all that noise, something will always happen to fit the past.

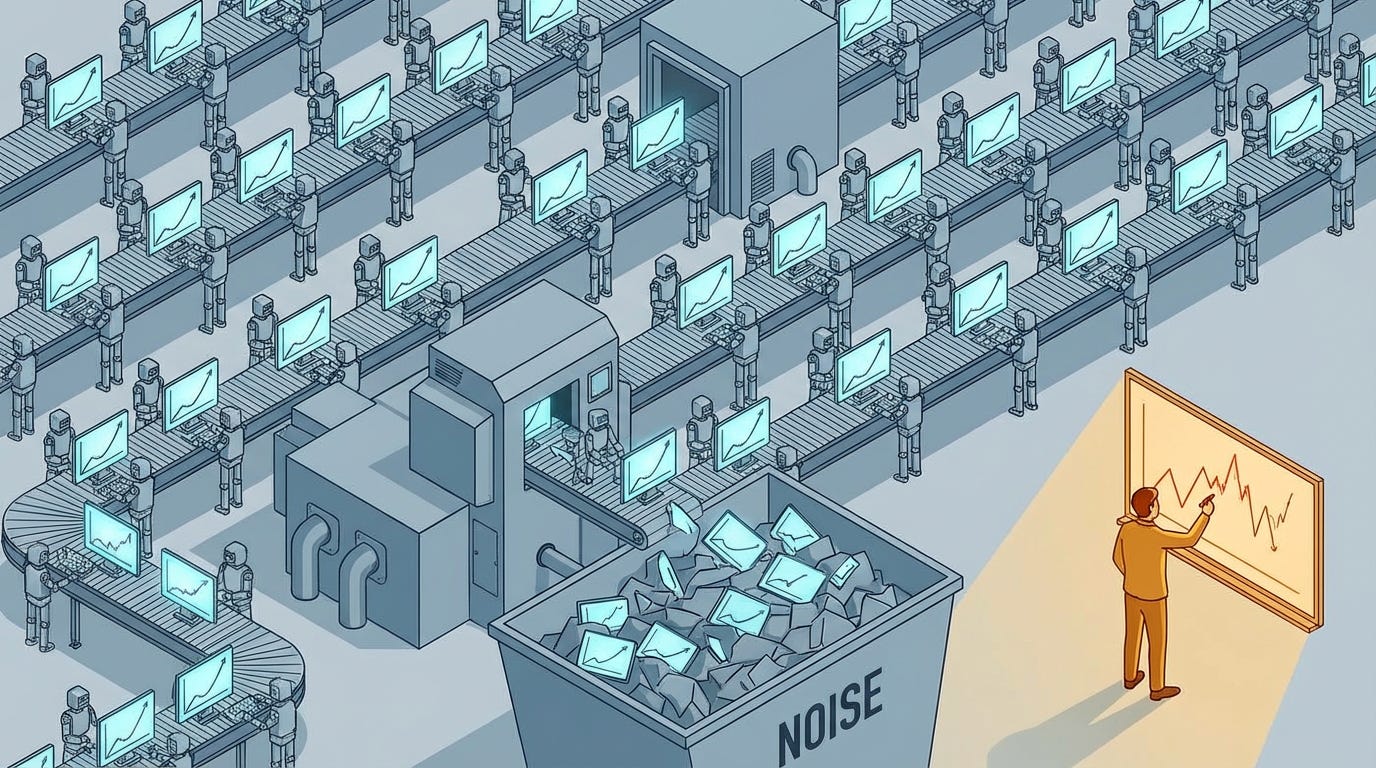

AI accelerates every step of that loop. So instead of running 20 bad tests manually, you can run 20,000 with AI assistance.

And you’ll find something that looks fantastic.
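Here is a minimal sketch of how that scales, under a deliberately idealised assumption. Each "strategy" below is just one year of independent, zero-mean daily returns, so its true Sharpe ratio is exactly zero. Real parameter sweeps produce correlated variants rather than independent ones, so treat the numbers as illustrative. The function names are hypothetical. The best of the first 20 draws stands in for a manual round of testing, and the best of all 20,000 stands in for an automated loop.

```python
import math
import random


def annualised_sharpe(returns, periods_per_year=252):
    """Annualised Sharpe ratio of daily returns, assuming a zero risk-free rate."""
    n = len(returns)
    mean = sum(returns) / n
    var = sum((r - mean) ** 2 for r in returns) / (n - 1)
    return mean / math.sqrt(var) * math.sqrt(periods_per_year)


def noise_strategy_sharpes(n_strategies, n_days=252, seed=0):
    """Sharpe ratios of hypothetical 'strategies' whose daily returns are pure noise (true Sharpe = 0)."""
    rng = random.Random(seed)
    return [
        annualised_sharpe([rng.gauss(0.0, 0.01) for _ in range(n_days)])
        for _ in range(n_strategies)
    ]


sharpes = noise_strategy_sharpes(20_000)
best_of_20 = max(sharpes[:20])   # roughly what a manual tester might try
best_of_20000 = max(sharpes)     # what an automated loop can churn through
print(f"best of 20 noise strategies:     {best_of_20:.2f}")
print(f"best of 20,000 noise strategies: {best_of_20000:.2f}")
```

With one year of data, the sampling error of an annualised Sharpe ratio is roughly 1. In this setup, the best of 20,000 zero-edge strategies will typically land somewhere around 4, a number that would look extraordinary in a backtest report.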

Nice-looking backtests are cheap now.

This is worth sitting with for a moment.

In the age of AI, a beautiful backtest proves almost nothing.

The probability that some parameter combination produces an amazing equity curve approaches certainty as the number of combinations you try increases.

This is just the multiple testing problem, and it’s been well understood in statistics for decades. If you run 1,000 tests at 5% significance on strategies with no real edge, you’d expect about 50 false positives. AI just makes it trivially easy to run those 1,000 tests without even realising you’re doing it.
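Here is the arithmetic, under the simplifying assumption that all $N$ tests are independent and none of the strategies has a real edge, with $\alpha$ as the significance level:

$$
\mathbb{E}[\text{false positives}] = N\alpha, \qquad P(\text{at least one false positive}) = 1 - (1 - \alpha)^{N}
$$

With $N = 1000$ and $\alpha = 0.05$, that gives 50 expected false positives, and $1 - 0.95^{1000}$ is indistinguishable from 1. Even $N = 20$ gives $1 - 0.95^{20} \approx 0.64$, so twenty tweaks already make at least one spurious "discovery" more likely than not.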

So the thing that used to be hard (producing a good-looking backtest) is now easy. Which means it’s no longer informative.

So what actually matters?

Before a backtest even exists, there should be a question: why would the market pay me to do this trade?

The classic engineer’s mistake is to skip this question (I’m speaking from experience).

Engineers work on problems with well-understood physics, and we tend to assume that trading strategies belong in that category. We go straight to data, straight to code, straight to backtests.

Eventually, we realise that that’s backwards. We have to do the work to discover the underlying physics first.

Answering the question “why would the market pay me to do this trade” requires thinking about incentives, market structure, behavioural biases, and institutional constraints. In other words, a theory of edge.

Good edges usually exist because of something structural:

Risk premia (getting paid to bear risk others want to offload)

Liquidity provision (facilitating trades for price-insensitive participants)

Behavioural biases (systematic under- or over-reaction)

Institutional constraints (index rebalancing, regulatory requirements)

Take momentum as an example. It shows up in backtests across practically every asset class. But if you discover momentum through data mining without understanding why it exists (career risk, slow capital reallocation, persistent underreaction), you’ll goal-seek a set of parameters that maximise momentum returns in a backtest.

At best, that’s wasted effort. At worst, you’re destroying a perfectly good edge by overfitting to noise.

AI has no theory of edge.

LLMs know how strategy rules are described. They know what backtests look like. They know what indicators people use.

But they don’t know which ideas are rubbish. They don’t know which edges have real economic drivers behind them. They can’t distinguish a statistical mirage from a structural opportunity.

Why?

Because the internet is flooded with trading nonsense, and the model learned from all of it.

When you ask AI for trading strategies, you’re getting the average of the internet’s worst ideas, dressed up in professional-looking code, spouted with unwarranted conviction.

AI is a tool for doing the technical work faster. I use it every day. But the technical work was never the hard part.

The real scarcity is judgement.

In the AI era, the scarce resource isn’t coding ability, data access, or computing power. Those are now abundant (although my compute bill is taking a hammering these days… another side effect of the AI era).

What’s scarce is scepticism, research discipline, statistical thinking that recognises uncertainty, and market intuition.

Judgement, basically.

I reckon AI will absolutely transform quantitative trading. But probably not in the way people expect. It won’t magically create thousands of profitable traders. It will create millions of people capable of producing beautiful backtests, most of which will be illusions.

The traders who do well will still be the ones who start somewhere different. Not with a backtest, but with that question: why would the market pay me to do this trade?

That question is the beginning of every real edge. And it’s still hard to answer.

At Robot Wealth, we spend a lot of time on exactly this question. What actually causes profitable trading opportunities to exist? Who’s on the other side, and why will they keep showing up? Without that foundation, quantitative research is just automated pattern hunting.
